Can AI Help Me (Us) Build a Better Chess Training Coach?! Part 2

LLAMA you are stupid, Stockfish you are blind.

In Part 1 (https://lichess.org/@/Busbar/blog/can-ai-help-me-us-build-better-chess-training-part-1/TtEM87xh), I explained the rationale behind my idea and why I needed a better kind of training: a coach that could present real-world, imperfect positions to help me sharpen my positional understanding in practical situations.

In this part, I’ll walk you through how I started building exactly that...

LLAMA, Create a Positional Setup.

When I kicked off the project, my initial thought was simple: “Why not use LLAMA to generate strategic positions that challenge me?” It sounded straightforward—and in a way, it was.

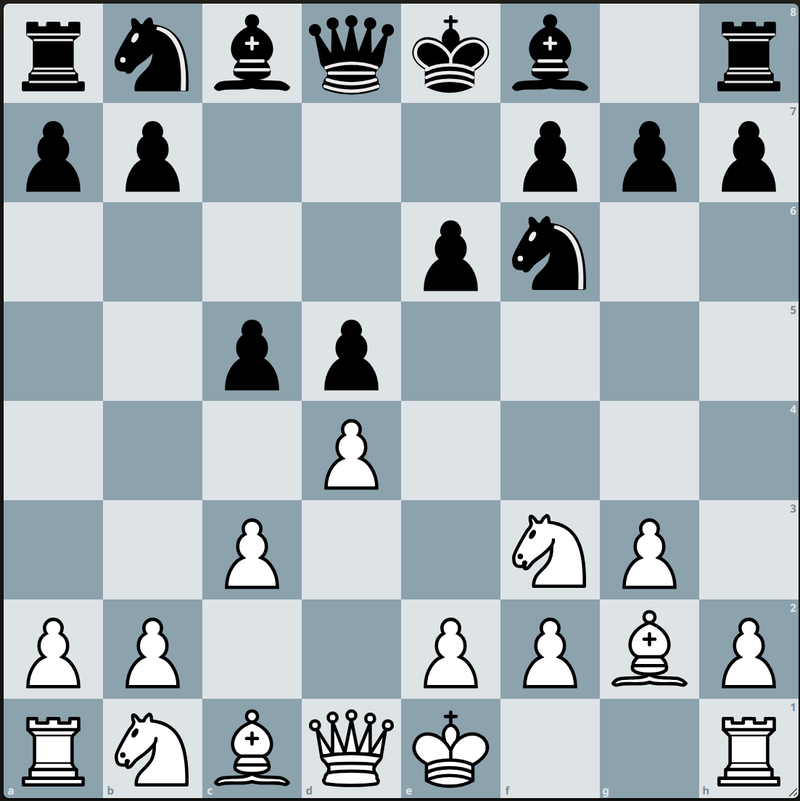

LLAMA began by generating this position:

Then LLAMA gave me the prompt:

"White to move. Gain space and restrict your opponent."

And the suggested solution?

The move d4–d5 gains space in the center and restricts Black’s knight from jumping to c6 or e6. It also makes it harder for Black to organize counterplay.

So, LLAMA’s "correct move" was... d5?!

I blinked.

d5?!

Bro... there’s no pawn on d4. There’s literally no legal move to d5 in this position.

I tilted my head, scrolled back up, checked the board again. Nope. Totally illegal.

So I asked myself: How is LLAMA generating a nice position, but giving such trash suggestions? And here's the core of it...

Let’s Talk About LLAMA (No, Not the Animal)

LLAMA is what we call a large language model (LLM)—a type of AI trained on massive amounts of text data. That’s the key word here: text.

It doesn’t play chess like Stockfish. It doesn't simulate boards or calculate variations. Instead, it reads patterns in text, like chess books, online game commentary, annotated PGNs, even forums.

So when you ask LLAMA, “What’s the best move here?” it’s not calculating like a chess engine. It’s guessing based on what it’s seen in the past that looks similar.

It’s like asking a well-read student:

“You’ve read thousands of books on chess. What would you play here?”

They might give you something insightful... or something totally off if the pattern doesn’t match.

That’s the LLAMA effect.

Now Meet Stockfish: The Calculation Monster

Stockfish is not an LLM. It’s a chess engine—specifically designed to evaluate positions, simulate moves, and calculate concrete outcomes.

It doesn’t “guess.” It crunches numbers, evaluates millions of move sequences per second, and gives you the best lines based on:

- Material evaluation

- King safety

- Positional factors like outposts, pawn structure, open files

- Deep, layered calculation of tactics and strategies

Where LLAMA might say “Play d5, it sounds good,”

Stockfish says, “I looked 25 moves ahead. d5 is literally impossible.”

And that’s the key difference:

| Feature | LLAMA (Language Model) | Stockfish (Chess Engine) |
|---|---|---|
| Input | Text and patterns from human language | Board position (FEN/PGN) |
| Output | A prediction based on language patterns | The best move based on concrete calculation |
| Strength | Creativity, explanations, narrative | Accuracy, depth of calculation |
| Weakness | Hallucinates illegal or illogical moves | Doesn’t explain ideas in natural language |
| Best Used For | Puzzle storytelling, hint writing | Verifying moves, ensuring puzzle soundness |

Why LLAMA Alone Isn’t Enough (Yet)

LLAMA is brilliant at generating ideas. You can ask it to create a scenario involving a space advantage, a knight maneuver, or a kingside pawn storm—and it’ll paint a great story.

But it can’t always guarantee:

- The position is legal

- The move is valid

- The plan is sound

Because, again, it’s working with language, not logic.
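To make the "no pawn on d4" problem concrete, here is a minimal sketch of a sanity check you could run on an LLM's suggestion before trusting it. It only reads the piece-placement field of a FEN string with plain Python. The helper names `piece_at` and `white_pawn_push_possible` are my own, and the FEN is the standard starting position, used as a stand-in because the original position is only shown as an image. This is a cheap pre-check, not full legality: pins, checks, and captures are ignored, and a library such as python-chess is the better tool for the real thing.

```python
# Standard starting position, used here only as a stand-in example.
START_FEN = "rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR w KQkq - 0 1"


def piece_at(fen, square):
    """Return the piece letter on `square` (e.g. 'P' or 'n'), or None if empty."""
    placement = fen.split()[0]
    ranks = placement.split("/")            # rank 8 comes first, rank 1 last
    file_idx = "abcdefgh".index(square[0])  # 'a' -> 0 ... 'h' -> 7
    rank_idx = 8 - int(square[1])           # '8' -> 0 ... '1' -> 7
    col = 0
    for ch in ranks[rank_idx]:
        if ch.isdigit():
            col += int(ch)                  # a digit is a run of empty squares
            if col > file_idx:
                return None                 # the square sits inside that run
        else:
            if col == file_idx:
                return ch
            col += 1
    return None


def white_pawn_push_possible(fen, from_sq, to_sq):
    """Necessary (not sufficient) check for a one-square white pawn push."""
    return piece_at(fen, from_sq) == "P" and piece_at(fen, to_sq) is None


# LLAMA-style suggestion "d4-d5": there is no white pawn on d4 here.
print(white_pawn_push_possible(START_FEN, "d4", "d5"))
# A push that at least starts from a real pawn.
print(white_pawn_push_possible(START_FEN, "d2", "d3"))
```

If the first check fails, there is no point asking anyone, human or engine, whether the plan is sound.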

So, when I tried to train myself using just LLAMA’s output, I quickly ran into these weird moments:

- “Legal” moves that weren’t actually legal

- Good-looking suggestions that made no strategic sense

- Engine evaluations that totally contradicted what LLAMA said

So, What to Do?!

I realized I needed the best of both worlds.

I needed Stockfish—the cold, calculating beast that sees 30 moves ahead but can’t say a single word.

And I needed LLAMA—a creative powerhouse that can write poetry about a passed pawn but has no idea if it's even legal.

So, I had to build something to glue them together.

Enter: the Pipeline.

Now, “pipeline” is just a fancy word for a whole lot of Python code. Luckily, Python is a dream for chess projects: the python-chess library can talk to Stockfish, and llama-cpp (through its Python bindings, llama-cpp-python) can run LLAMA models. So I stitched it all together like this:

- Download the massive Lichess PGN database

  Yep. We’re talking millions of real games: mistakes, brilliance, and everything in between.

- Pick interesting games

  Filter by rating, opening, or pure randomness. I wanted variety.

- Analyze each game with Stockfish

  Focus on the middlegame. That’s where the juicy, quiet mistakes live. Not blunders, just the subtle stuff we club players miss.

- Extract the key moments

  Let Stockfish highlight questionable moves and suggest better ones. This is the learning goldmine.

- Send those moments to LLAMA

  Ask LLAMA: “Explain this. Make it human. Turn it into a puzzle with clear explanations, layered hints, and engaging commentary.”

- Output it all in a clean JSON format

  Structured puzzles ready to be dropped into our interactive chess board on the site.
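To give a feel for the "extract the key moments" and "output JSON" steps, here is a rough sketch in plain Python. It is not the actual pipeline code. It assumes the Stockfish analysis has already been run and stored as one row per move. The field names (`best_eval_cp`, `played_eval_cp`), the `KeyMoment` class, and both thresholds are hypothetical choices of mine, and the sample rows are made-up numbers, not real engine output.

```python
import json
from dataclasses import dataclass, asdict

# Hypothetical thresholds in centipawns: big enough to matter,
# small enough that the move is not an outright blunder.
QUIET_MIN_LOSS_CP = 50
BLUNDER_LOSS_CP = 200


@dataclass
class KeyMoment:
    fen: str       # position before the questionable move
    played: str    # move actually played, in SAN
    better: str    # engine's preferred move, in SAN
    loss_cp: int   # evaluation given up by the played move


def find_quiet_mistakes(analysed_moves):
    """Keep moves whose evaluation loss falls between the two thresholds."""
    moments = []
    for move in analysed_moves:
        # Both evaluations are from the point of view of the player to move.
        loss = move["best_eval_cp"] - move["played_eval_cp"]
        if QUIET_MIN_LOSS_CP <= loss < BLUNDER_LOSS_CP:
            moments.append(
                KeyMoment(move["fen"], move["played"], move["better"], loss)
            )
    return moments


def to_puzzle_json(moment, explanation, hints):
    """Merge the engine facts with the LLM's text into one JSON record."""
    puzzle = asdict(moment)
    puzzle["explanation"] = explanation
    puzzle["hints"] = hints
    return json.dumps(puzzle, indent=2)


# Made-up analysis rows for illustration only, not real engine output.
ITALIAN_FEN = "r1bqk1nr/pppp1ppp/2n5/2b1p3/2B1P3/5N2/PPPP1PPP/RNBQK2R w KQkq - 4 4"
sample = [
    {"fen": ITALIAN_FEN, "played": "h3", "better": "c3",
     "best_eval_cp": 30, "played_eval_cp": -30},   # loses 60: quiet mistake
    {"fen": ITALIAN_FEN, "played": "Bxf7+", "better": "c3",
     "best_eval_cp": 30, "played_eval_cp": -370},  # loses 400: blunder, skipped
    {"fen": ITALIAN_FEN, "played": "d3", "better": "c3",
     "best_eval_cp": 30, "played_eval_cp": 25},    # loses 5: fine, skipped
]

moments = find_quiet_mistakes(sample)
print(to_puzzle_json(moments[0], "LLM explanation goes here", ["hint 1", "hint 2"]))
```

The point of the split is that the facts (position, moves, evaluation loss) come from the engine, while the explanation and hints are the only parts LLAMA gets to write.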

Clean. Scalable. Beautiful.

Easy, right?

If you’re curious to see how it works—or you want to train smarter, not harder—explore www.aipawns.com.

Part 3 is coming soon, where I’ll dive into the code, the structure, and how this system can scale to generate hundreds of strategic puzzles customized for real learners like us.

Till then—stay sharp, stay curious, and stay safe.